Building a Production RAG Pipeline with Bedrock and OpenSearch Serverless

The first RAG pipeline I built in anger was a Saturday afternoon affair: a LangChain notebook, a FAISS index sitting on local disk, and an embedding loop. But as soon as that demo hits the real world, the questions change. How do you handle 10,000 documents? How do you refresh the index without rebuilding from scratch? Who owns the IAM policies? And finally, what is the cost floor?

Amazon Bedrock Knowledge Bases is the enterprise answer to these questions. It takes the "small distributed system" of RAG—the chunking, the embedding pipeline, the vector store provisioning, and the sync logic—and folds them into a managed service.

The Vector Backend Decision Matrix

The default vector backend for Bedrock is OpenSearch Serverless (OSS). It is a fine default, but it is also the most expensive, and understanding the OCU floor matters before you sign your team up for the bill.

| Vector Backend | Cost Floor | Latency | Best For |
|---|---|---|---|
| OpenSearch Serverless | ~$345/mo (2 OCU min) | Sub-100ms | High traffic, hybrid search, standard AWS RAG. |
| S3 Vectors | Pay-per-request | 100ms - 1s | Spiky traffic, indices up to 2 billion vectors (GA Dec 2025). |
| Aurora PostgreSQL | Instance price | Variable | Small datasets, SQL-familiar access patterns. |
| Pinecone / MongoDB | SaaS pricing | Variable | Existing platform investment outside of AWS. |
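The ~$345/mo figure for OpenSearch Serverless is simple arithmetic, and it is worth redoing for your own region. The sketch below assumes a price of $0.24 per OCU-hour and a 30-day month; both numbers are assumptions for illustration, not values taken from this article, so check the current pricing page before quoting them.

```python
# Assumed list price per OCU-hour (illustrative; verify for your region).
OCU_HOURLY_USD = 0.24
# Minimum OCU count quoted in the table above.
MIN_OCUS = 2
# A 30-day month, used only to keep the arithmetic easy to follow.
HOURS_PER_MONTH = 24 * 30


def monthly_floor(ocus: float = MIN_OCUS,
                  hourly_usd: float = OCU_HOURLY_USD,
                  hours: int = HOURS_PER_MONTH) -> float:
    """Return the cost of keeping `ocus` OCUs running for a month with zero traffic."""
    return round(ocus * hourly_usd * hours, 2)


print(f"Idle floor: ${monthly_floor():.2f}/month")
```

The point of the exercise is that this is a floor, not an estimate: it is what you pay before a single query arrives.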

Security & IAM: The Tripartite Trust Model

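The mental model has three parts: a trust policy that lets Bedrock assume the service role, a permissions policy that says what that role may do, and a Data Access Policy on the OSS collection. The Bedrock Service Role is the one doing the work, and the Data Access Policy must explicitly grant that role permission to touch the collection, because IAM alone is not sufficient.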

1. Trust Policy

Lets Bedrock assume the role.

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Principal": { "Service": "bedrock.amazonaws.com" },
      "Action": "sts:AssumeRole"
    }
  ]
}

2. Permissions Policy

Read S3, invoke embedding model, and write to OSS.

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": ["s3:GetObject", "s3:ListBucket"],
      "Resource": ["arn:aws:s3:::my-kb-docs", "arn:aws:s3:::my-kb-docs/*"]
    },
    {
      "Effect": "Allow",
      "Action": "bedrock:InvokeModel",
      "Resource": "arn:aws:bedrock:*::foundation-model/amazon.titan-embed-text-v2:0"
    },
    {
      "Effect": "Allow",
      "Action": "aoss:APIAccessAll",
      "Resource": "arn:aws:aoss:*:*:collection/*"
    }
  ]
}
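The third leg, the Data Access Policy on the collection, lives in OpenSearch Serverless rather than IAM. A minimal sketch of what it might look like follows. The collection name `company-docs`, the policy name `kb-data-access`, and the exact permission list are assumptions chosen for illustration; trim the permissions to what your setup needs. The `attach_policy` helper is hypothetical and imports boto3 lazily, so the policy builder can be inspected without AWS credentials.

```python
import json


def build_data_access_policy(collection_name: str, role_arn: str) -> str:
    """Build a data access policy document granting one role access to one collection."""
    policy = [
        {
            "Description": "Let the Bedrock KB service role use the collection",
            "Rules": [
                {
                    "ResourceType": "collection",
                    "Resource": [f"collection/{collection_name}"],
                    "Permission": ["aoss:DescribeCollectionItems"],
                },
                {
                    "ResourceType": "index",
                    "Resource": [f"index/{collection_name}/*"],
                    "Permission": [
                        "aoss:CreateIndex",
                        "aoss:DescribeIndex",
                        "aoss:UpdateIndex",
                        "aoss:ReadDocument",
                        "aoss:WriteDocument",
                    ],
                },
            ],
            "Principal": [role_arn],
        }
    ]
    return json.dumps(policy)


def attach_policy(policy_json: str, name: str = "kb-data-access"):
    """Hypothetical helper: create the policy in OpenSearch Serverless."""
    import boto3  # imported lazily so the builder above has no AWS dependency

    client = boto3.client("opensearchserverless")
    return client.create_access_policy(name=name, type="data", policy=policy_json)


policy_json = build_data_access_policy(
    "company-docs",  # assumed collection name
    "arn:aws:iam::123456789012:role/BedrockKBRole",
)
print(policy_json)
```

If ingestion fails with a 403 even though the IAM policy looks right, a missing or mismatched principal in this document is a common place to look first.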

Advanced Chunking Strategies

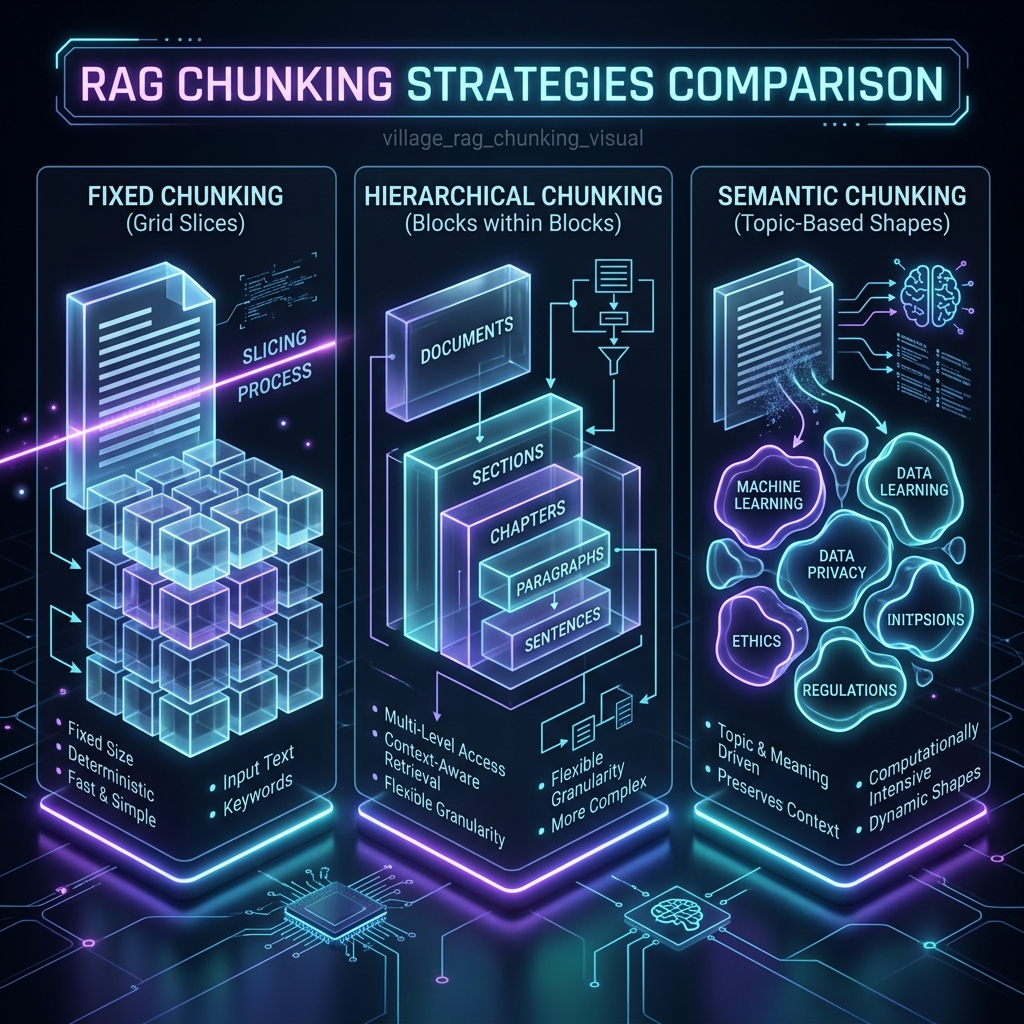

The two knobs that meaningfully affect retrieval quality are the chunking strategy and the embedding model. Both choices are more consequential than the documentation suggests.

- Fixed-Size: 300-token slices. Predictable, but splits tables and code blocks.

- Hierarchical: Retrieves on small child chunks but returns the 1500-token parent to the model. Best for technical docs.

- Semantic: Uses an embedding model to detect topic shifts. Highest quality for narrative content, but slowest to compute.
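To make the hierarchical idea concrete, here is a toy sketch of parent and child chunking. It is not how Bedrock implements it: tokens are approximated by whitespace-separated words, and the function names `split_tokens` and `hierarchical_chunks` are made up for this example. The sizes mirror the 1500-token parent and 300-token child mentioned above, with an assumed overlap of 60.

```python
from typing import Dict, List


def split_tokens(tokens: List[str], size: int, overlap: int = 0) -> List[List[str]]:
    """Split tokens into windows of `size`, each sharing `overlap` tokens with the previous one."""
    if size <= overlap:
        raise ValueError("size must be larger than overlap")
    step = size - overlap
    chunks: List[List[str]] = []
    start = 0
    while start < len(tokens):
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):
            break  # this window already reached the end; avoid a redundant tail chunk
        start += step
    return chunks


def hierarchical_chunks(text: str, parent_size: int = 1500,
                        child_size: int = 300, overlap: int = 60) -> List[Dict]:
    """Return child chunks, each carrying the parent it would be swapped for at answer time."""
    tokens = text.split()  # crude stand-in for a real tokenizer
    results: List[Dict] = []
    for parent_id, parent in enumerate(split_tokens(tokens, parent_size)):
        parent_text = " ".join(parent)
        for child in split_tokens(parent, child_size, overlap):
            results.append({
                "parent_id": parent_id,
                "child_text": " ".join(child),   # what gets embedded and searched
                "parent_text": parent_text,      # what gets handed to the model
            })
    return results


sample = " ".join(f"w{i}" for i in range(3000))
chunks = hierarchical_chunks(sample)
print(len(chunks), "child chunks across", len({c["parent_id"] for c in chunks}), "parents")
```

The design choice to notice is the asymmetry: search happens on the small `child_text`, where embeddings are precise, while the model sees the larger `parent_text`, where surrounding context survives.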

Implementation: The Boto3 SDK Path

Here is the exact code path to stand up a Knowledge Base with Hierarchical Chunking.

import boto3
import time

bedrock_agent = boto3.client('bedrock-agent', region_name='us-east-1')

# Step 1: Create the Knowledge Base
# Assumes the OSS collection and the vector index named below already exist.
kb = bedrock_agent.create_knowledge_base(
    name='company-docs-kb',
    description='Internal policy and engineering docs',
    roleArn='arn:aws:iam::123456789012:role/BedrockKBRole',
    knowledgeBaseConfiguration={
        'type': 'VECTOR',
        'vectorKnowledgeBaseConfiguration': {
            'embeddingModelArn': 'arn:aws:bedrock:us-east-1::foundation-model/amazon.titan-embed-text-v2:0'
        }
    },
    storageConfiguration={
        'type': 'OPENSEARCH_SERVERLESS',
        'opensearchServerlessConfiguration': {
            'collectionArn': 'arn:aws:aoss:us-east-1:123456789012:collection/abc123',
            'vectorIndexName': 'company-docs-index',
            'fieldMapping': {
                'vectorField': 'embedding',
                'textField': 'text',
                'metadataField': 'metadata'
            }
        }
    }
)
kb_id = kb['knowledgeBase']['knowledgeBaseId']

# Step 2: Attach S3 Data Source with Hierarchical Chunking
ds = bedrock_agent.create_data_source(
    knowledgeBaseId=kb_id,
    name='company-docs-s3',
    dataSourceConfiguration={
        'type': 'S3',
        's3Configuration': {
            'bucketArn': 'arn:aws:s3:::my-kb-docs'
        }
    },
    vectorIngestionConfiguration={
        'chunkingConfiguration': {
            'chunkingStrategy': 'HIERARCHICAL',
            'hierarchicalChunkingConfiguration': {
                # Parent level first, then child level
                'levelConfigurations': [
                    {'maxTokens': 1500},
                    {'maxTokens': 300}
                ],
                'overlapTokens': 60
            }
        }
    }
)

# Step 3: Kick off the first ingestion job
job = bedrock_agent.start_ingestion_job(
    knowledgeBaseId=kb_id,
    dataSourceId=ds['dataSource']['dataSourceId']
)
job_id = job['ingestionJob']['ingestionJobId']

# Step 4: (Expert Path) Poll for completion and check statistics
while True:
    status = bedrock_agent.get_ingestion_job(
        knowledgeBaseId=kb_id,
        dataSourceId=ds['dataSource']['dataSourceId'],
        ingestionJobId=job_id
    )['ingestionJob']
    print(f"Status: {status['status']}")
    if status['status'] in ['COMPLETE', 'FAILED', 'STOPPED']:
        # The statistics block reports how many documents were scanned, indexed, and failed
        stats = status['statistics']
        print(f"Scanned: {stats['numberOfDocumentsScanned']}")
        print(f"Indexed (new): {stats['numberOfNewDocumentsIndexed']}")
        print(f"Failed: {stats['numberOfDocumentsFailed']}")
        break
    time.sleep(30)
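Printing the statistics is fine in a notebook, but a scheduled sync usually needs to fail loudly. The sketch below turns the `statistics` dictionary from `get_ingestion_job` into a pass/fail check. The function names and the `max_failure_rate` threshold are hypothetical; the field names are the ones used in the polling loop above plus `numberOfModifiedDocumentsIndexed`.

```python
def summarize_ingestion(stats: dict) -> dict:
    """Reduce the ingestion statistics block to a few numbers worth alerting on."""
    scanned = stats.get("numberOfDocumentsScanned", 0)
    failed = stats.get("numberOfDocumentsFailed", 0)
    indexed = (stats.get("numberOfNewDocumentsIndexed", 0)
               + stats.get("numberOfModifiedDocumentsIndexed", 0))
    failure_rate = failed / scanned if scanned else 0.0
    return {
        "scanned": scanned,
        "indexed": indexed,
        "failed": failed,
        "failure_rate": failure_rate,
    }


def check_ingestion(stats: dict, max_failure_rate: float = 0.0) -> dict:
    """Raise if more documents failed than the caller is willing to tolerate."""
    summary = summarize_ingestion(stats)
    if summary["failure_rate"] > max_failure_rate:
        raise RuntimeError(
            f"{summary['failed']} of {summary['scanned']} documents failed ingestion"
        )
    return summary


# Example with made-up numbers shaped like the statistics block.
example = {
    "numberOfDocumentsScanned": 200,
    "numberOfNewDocumentsIndexed": 180,
    "numberOfModifiedDocumentsIndexed": 10,
    "numberOfDocumentsFailed": 10,
}
print(summarize_ingestion(example))
```

In a pipeline, calling `check_ingestion(status['statistics'])` right after the loop exits would turn a quiet gap in the index into a visible job failure.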

Querying the Knowledge Base

The retrieve_and_generate API returns grounded answers along with the citations that support them.

import boto3

runtime = boto3.client('bedrock-agent-runtime', region_name='us-east-1')

# kb_id is the knowledgeBaseId returned by create_knowledge_base earlier
response = runtime.retrieve_and_generate(
    input={'text': 'What is our policy on remote work for engineering?'},
    retrieveAndGenerateConfiguration={
        'type': 'KNOWLEDGE_BASE',
        'knowledgeBaseConfiguration': {
            'knowledgeBaseId': kb_id,
            'modelArn': 'arn:aws:bedrock:us-east-1::foundation-model/anthropic.claude-3-5-sonnet-20240620-v1:0',
            'retrievalConfiguration': {
                'vectorSearchConfiguration': {
                    'numberOfResults': 5,
                    'overrideSearchType': 'HYBRID'  # Essential for keyword+vector
                }
            }
        }
    }
)

print(response['output']['text'])

# Each citation lists the retrieved chunks that back part of the answer
for citation in response.get('citations', []):
    for ref in citation.get('retrievedReferences', []):
        print(ref['location'], ref['content']['text'][:120])
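For an application, it is often handier to flatten the citations into a plain list of sources than to print them. The helper below is a sketch under the assumption that documents come from S3, so each reference carries an `s3Location` with a `uri`; the function name `flatten_citations` and the sample response are invented for illustration.

```python
from typing import Dict, List


def flatten_citations(response: dict, snippet_len: int = 120) -> List[Dict]:
    """Collect one row per retrieved reference: source URI plus a short text snippet."""
    rows: List[Dict] = []
    for citation in response.get("citations", []):
        for ref in citation.get("retrievedReferences", []):
            location = ref.get("location", {})
            uri = location.get("s3Location", {}).get("uri")  # None for non-S3 sources
            text = ref.get("content", {}).get("text", "")
            rows.append({"uri": uri, "snippet": text[:snippet_len]})
    return rows


# A hand-written response in the same shape as the loop above expects.
sample_response = {
    "output": {"text": "Engineers may work remotely up to three days a week."},
    "citations": [
        {
            "retrievedReferences": [
                {
                    "content": {"text": "Remote work policy: engineering staff may..."},
                    "location": {
                        "type": "S3",
                        "s3Location": {"uri": "s3://my-kb-docs/hr/remote-work.pdf"},
                    },
                }
            ]
        }
    ],
}

for row in flatten_citations(sample_response):
    print(row["uri"], "|", row["snippet"])
```

Using `.get` throughout means a response with no citations yields an empty list instead of a `KeyError`, which is the case you want to detect and surface to the user.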

The Production Checklist

- Sync Failures: Always monitor the statistics block in get_ingestion_job. Corrupted PDFs will fail silently, leaving gaps in your index.

- Metadata Filtering: Use .metadata.json sidecar files in S3. This is mandatory for multi-audience KBs to prevent "vibes-based" disambiguation.

- Model Migrations: You cannot swap embedding models in an existing KB. You must create a new KB, re-ingest, and cut over at the application layer.

- Cost Monitoring: OpenSearch Serverless bills by OCU (2 OCU minimum). A single misconfigured retry loop can burn $1,000 in an afternoon. Use budget alarms.
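The metadata filtering item is the one most easily shown in code. The sketch below builds a sidecar file for a document and the matching retrieval filter. The attribute name `audience`, its values, and the helper names are assumptions for illustration; the sidecar shape (`metadataAttributes`) and the `equals` filter are written as I understand the Knowledge Bases format, so verify them against the current documentation before relying on them.

```python
import json


def sidecar_key(document_key: str) -> str:
    """S3 key of the metadata sidecar that sits next to a document."""
    return f"{document_key}.metadata.json"


def sidecar_body(attributes: dict) -> str:
    """JSON body of the sidecar file, wrapping the attributes for one document."""
    return json.dumps({"metadataAttributes": attributes})


def audience_filter(audience: str) -> dict:
    """Retrieval filter that keeps only chunks tagged for one audience."""
    return {"equals": {"key": "audience", "value": audience}}


def retrieval_config(audience: str, number_of_results: int = 5) -> dict:
    """retrievalConfiguration fragment combining result count and metadata filter."""
    return {
        "vectorSearchConfiguration": {
            "numberOfResults": number_of_results,
            "filter": audience_filter(audience),
        }
    }


print(sidecar_key("hr/remote-work.pdf"))
print(sidecar_body({"audience": "engineering"}))
print(json.dumps(retrieval_config("engineering"), indent=2))
```

The dictionary from `retrieval_config` is meant to be passed as `retrievalConfiguration` in the query call, so the model only ever sees chunks tagged for the audience asking the question.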

The 2026 Roadmap

The focus is shifting to the edges. S3 Vectors changed the economics for large RAG deployments overnight. AgentCore is increasingly the choice for systems that need to take actions, while Bedrock Data Automation has become the best way to parse complex PDFs with tables and figures.

For multi-modal workloads, Amazon Nova Multimodal Embeddings V1 (3072 dimensions) is the new standard, enabling RAG over product catalogs and manuals where diagrams matter as much as text.

This architecture is unglamorous and well-documented—the only kind that survives the shift from demo to system.