RAG vs. MCP: What Every AI Developer Actually Needs to Know

You've probably built something with an LLM and felt that moment where things just stop working the way you expected. The model feels smart—it reasons well, writes clean code, and breaks down complex ideas—but then reality hits: it has no idea what happened last Tuesday. It can't access your internal docs. It doesn't know your customer's order status or what's in your production database right now.

That's not a skill problem. That's a context problem.

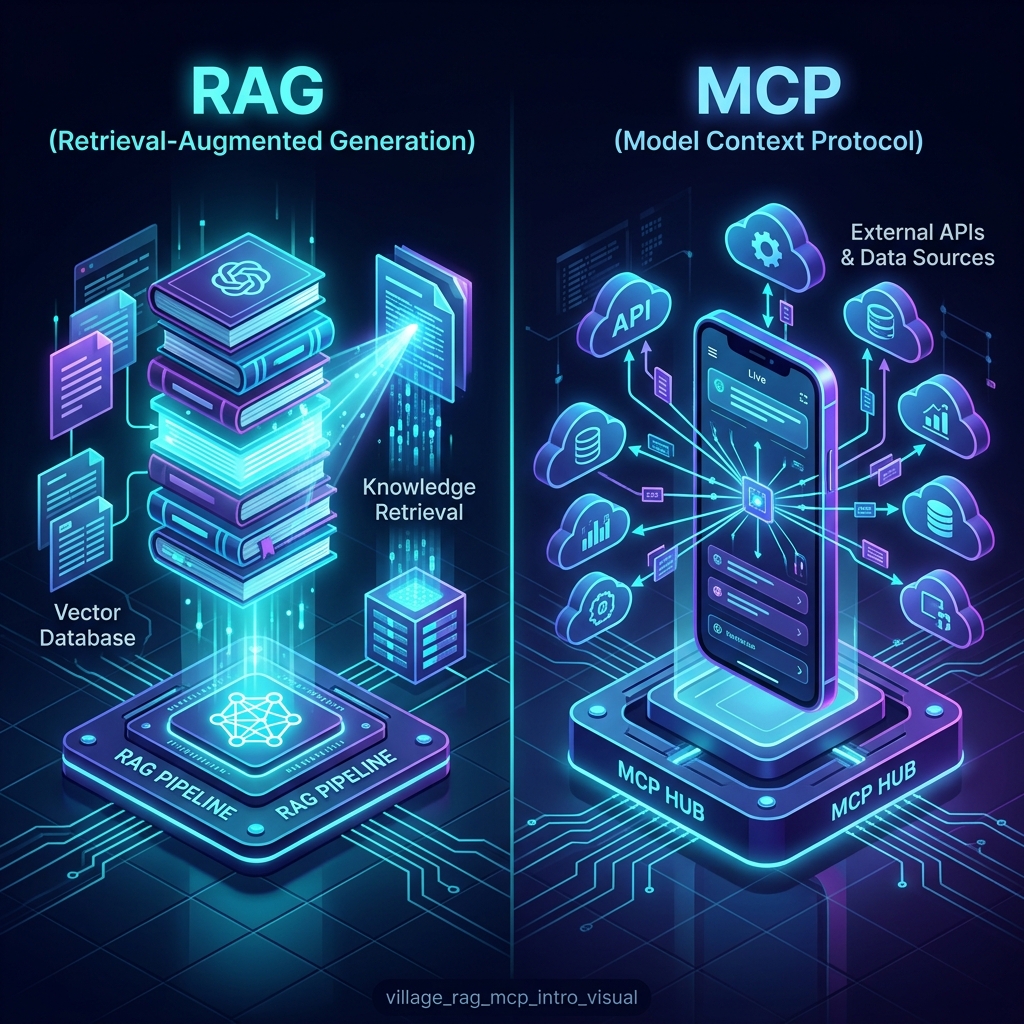

To solve this, two distinct patterns have emerged: RAG (Retrieval-Augmented Generation) and MCP (Model Context Protocol). While they are often discussed as competitors, they solve different layers of the AI context stack.

What RAG Actually Is: The Library

RAG is about Long-term Memory. Instead of relying purely on the model's training data, you pull relevant content from a specific body of documents and hand it to the model as context at query time. Think of it as giving the AI a well-organized library with a high-performance search engine attached.

The Technical Pipeline

- Ingestion: Documents (PDFs, Wikis, Codebases) are split into chunks. Semantic chunking is preferred over fixed-size to preserve natural topic boundaries.

- Embedding: Chunks are converted into vector representations using models like

text-embedding-3. - Retrieval: Similarity search finds the Top-K relevant chunks. Cross-Encoder Rerankers are used as a second pass to filter out noise.

- Generation: The LLM generates a response grounded in that freshly retrieved context.

Limitation: RAG only works on what you've stored. It doesn't fetch anything live. It gives the AI knowledge, but it doesn't give it agency.

What MCP Actually Is: The Smartphone

MCP stands for Model Context Protocol. It is a standardized way for AI models to connect to live systems and use them in real-time. If RAG is a library, MCP is a smartphone. The model can check live data, call APIs, and trigger workflows.

The MCP Specification (The Three Primitives)

The Model Context Protocol operates over JSON-RPC 2.0 and defines three core ways to extend a model:

- Resources: Read-only data sources (e.g., a file, a database table, or a log stream).

- Tools: Executable functions that can perform actions (e.g., "Create Jira Ticket", "Send Slack Message").

- Prompts: Standardized templates that help the model understand how to interact with specific systems.

Architecture: Client-Host-Server

- Client: The user interface (e.g., Claude Desktop, your custom IDE).

- Host: The AI model that consumes the protocol.

- Server: The integration layer you build to expose your data/tools.

Comparison: RAG vs. MCP

| Aspect | RAG (Memory) | MCP (Agency) |

|---|---|---|

| Primary Goal | Long-term knowledge retrieval. | Real-time access and action. |

| Data State | Static (indexed, then queried). | Live (queried in real-time). |

| Latency | Low (milliseconds from vector DB). | Medium/High (limited by API speed). |

| Implementation | Moderate (Chunking, Reranking). | High (Integrations, Security, Auth). |

| Write Access | None. | Full read/write potential. |

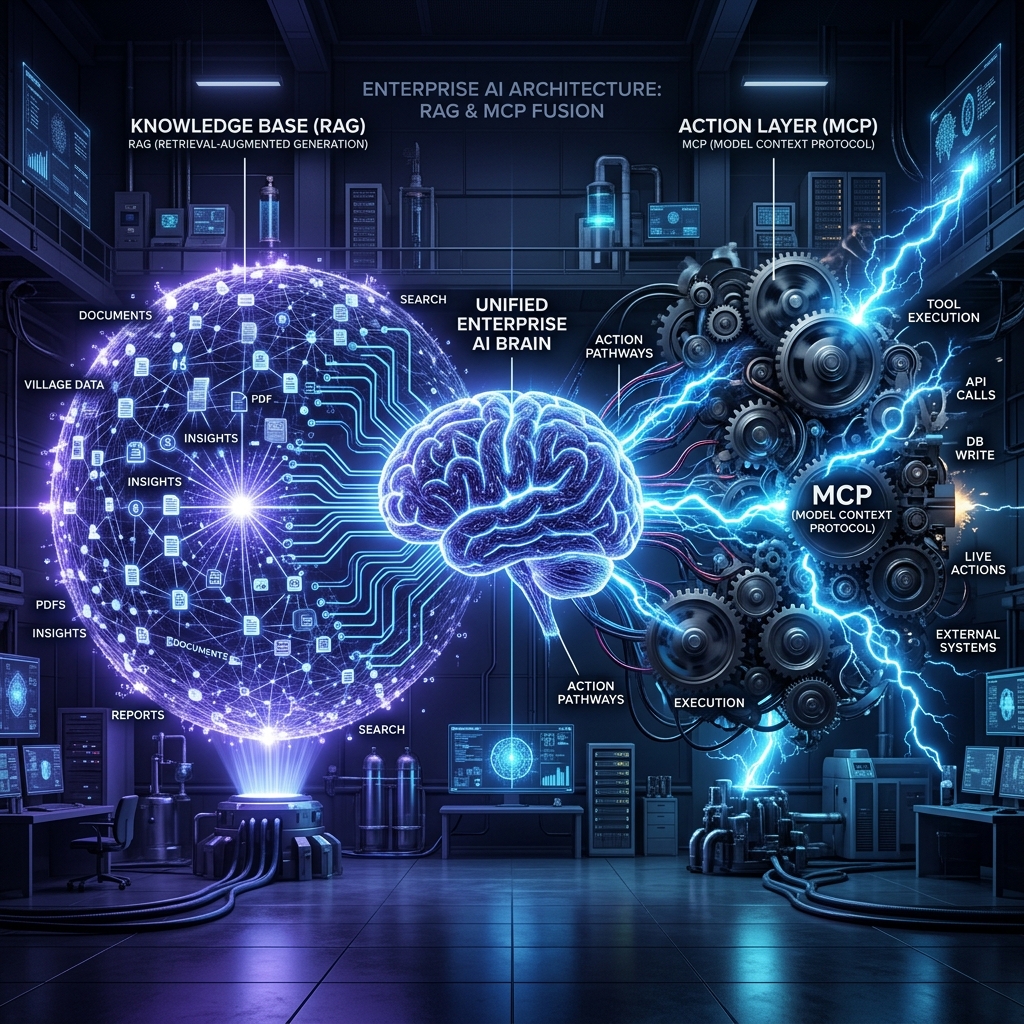

The Unified Architecture: RAG + MCP

In serious production environments, RAG and MCP are the default combined architecture.

The Workflow Case: "What's our return policy for orders over $200, and can you check if order #7821 is eligible?"

- RAG Query: Pull the general return policy from the document store (Memory).

- MCP Call: Look up order

#7821in the CRM in real-time (Agency). - Synthesis: The model chains both results into a coherent, actionable answer.

What Most Tutorials Skip

1. Context Bloat

Combining RAG and MCP can quickly exceed the model's token budget. Effective systems use Context Compression to summarize retrieved documents before passing them to the final reasoning loop.

2. The Trust Model

When an AI can write to your systems via MCP, Human-in-the-Loop (HITL) isn't a feature—it's a security requirement. Write permissions and audit logging must be part of your base architecture.

3. Latency Streaming

RAG retrieval is fast; MCP calls are slow. Your UI must use Streaming Responses to provide immediate feedback while the model waits for live API results.

Conclusion

RAG gives your AI knowledge; MCP gives your AI the ability to act. One handles the past; the other handles the present. Understanding the layer at which each operates is the key to building AI systems that don't just "chat," but actually work.